Closing the Loop on AI Workflows

Despite having robust clients, powerful tools, and advanced models, the challenge of making these systems perform consistently as intended remains surprisingly difficult. While each component excels individually, real friction emerges during workflow iteration, particularly as requirements naturally shift over time.

Over the past year, I’ve accumulated various workflows across different platforms and tools. Each addresses specific needs and supports regular tasks. However, maintaining these workflows presents genuine challenges—updates are manual, inconvenient, and frustrating.

Real-World Example: Post-Meeting Follow-Up

A practical illustration involves a single-prompt workflow in a Claude Project that processes meeting transcripts to identify commitments and action items, then creates Todoist tasks through the Disco MCP integration.

Iterative improvements included:

- Avoiding task creation for others’ commitments

- Adding stakeholder tags

- Generating Slack message drafts

- Opening Pylon issues for specific items

The paradox: this workflow saves time, yet manually updating prompts contradicts that benefit.

Timing compounds the problem. Workflows execute during urgent moments, precisely when their effectiveness becomes clearest—and when capacity for refinement is lowest. Tools lack contextual awareness and memory; they don’t recognize failures or improve without active intervention.

The Solution: MCP-Powered Feedback Loop

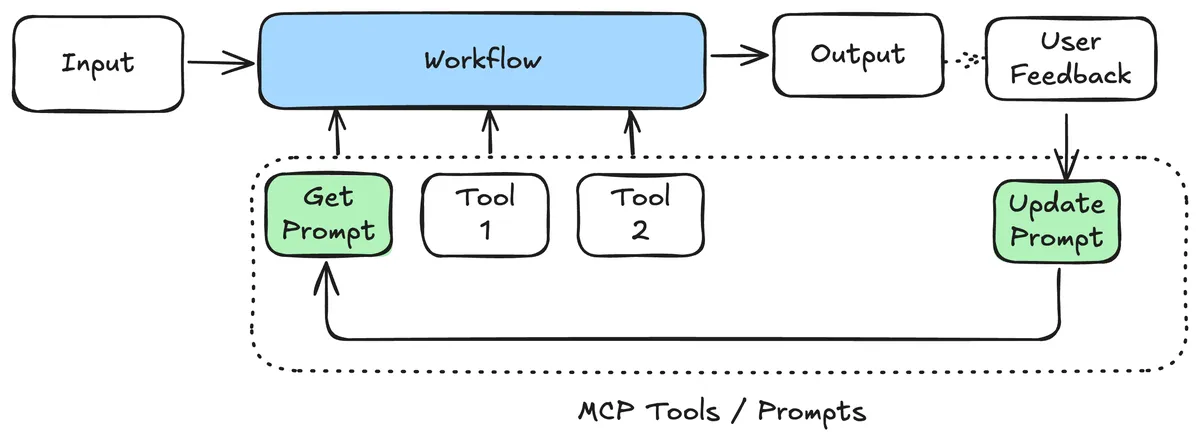

Building a feedback mechanism directly into workflows addresses these limitations. MCP’s advantages include prompt support, cross-client compatibility, and local or remote execution capability.

Architecture

The server provides three tools:

get_current_prompt– Retrieves the active system promptupdate_prompt– Modifies the system prompt with fresh instructionssubmit_feedback– Records feedback and performance metrics (future implementation)

Currently, prompt updates handle most refinements when workflows underperform. Automatic versioning tracks evolution throughout the process.

Evolution in Practice

Version history reveals the story: early iterations provided basic instructions, while later versions (like 15) contained nuanced guidance distinguishing customer commitments from internal recommendations. This clarification dramatically improved accuracy—the system previously created tasks for suggested actions rather than actual obligations.

Simplicity proves powerful: adjusting the prompt when something feels imprecise, with version history documenting the journey.

Long-Term Feasibility Assessment

Honestly, this isn’t the ideal permanent solution.

Clients themselves should ideally integrate such feedback mechanisms with appropriate safeguards and refined user experience, though this sacrifices portability. The approach also creates vulnerability to prompt injection attacks.

For low-risk workflows requiring substantial iteration, results have been genuinely impressive.

This fundamentally represents AI memory—not merely storing information, but enhancing performance progressively. It learns, adapts, and improves continuously.

The most appealing aspect? Compatibility with existing MCP tools eliminates rebuilding Jira, Todoist, or Slack integrations; simply layer feedback capabilities atop current systems.

Summary

This approach resolves workflow inconsistency—the persistent gap between desired and actual AI behavior as circumstances evolve. By implementing MCP-powered feedback mechanisms, prompts become refinable in real-time, maintain version history, and preserve consistency across platforms. While not perfect long-term, this offers practical efficiency for iterative, real-world AI workflow improvement.